artificial intelligence: A type of knowledge-based decision-making exhibited by machines or computers. The term also refers to the field of study in which scientists try to create machines or computer software capable of intelligent behavior.

autonomous vehicle: A driverless car, submarine, airplane or other vehicle. These vehicles pilot themselves based on instructions that have been programmed into their computer guidance system, although those instructions can sometimes be changed midway to their destination.

computer program: A set of instructions that a computer uses to perform some analysis or computation. The writing of these instructions is known as computer programming.

electrical engineer: An engineer who designs, builds or analyzes electrical equipment.

gauge: A device to measure the size or volume of something. For instance, tide gauges track the ever-changing height of coastal water levels throughout the day. The term can also refer to any system or event that can be used to estimate the size or magnitude of something else. (v. to gauge) To measure or estimate the size of something.

hue: A color or shade of some color.

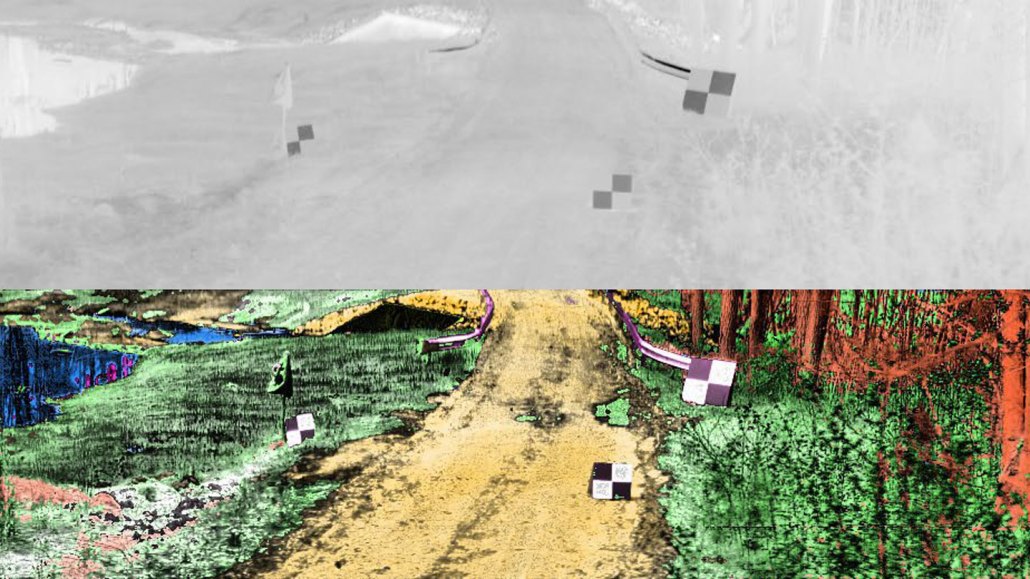

infrared: A type of electromagnetic radiation invisible to the human eye. The name incorporates a Latin term and means “below red.” Infrared light has wavelengths longer than those visible to humans. Other invisible types of radiation include X-rays, radio waves and microwaves. Because warm objects give off infrared radiation, infrared sensors and cameras tend to record the heat signature of an object or environment.

innovation: (v. to innovate; adj. innovative) An adaptation or improvement to an existing idea, process or product that is new, clever, more effective or more practical.

navigate: To find one’s way through a landscape using visual cues, sensory information (like scents), magnetic information (like an internal compass) or other techniques.

theoretical physicist: A scientist who studies the nature and properties of matter and energy through math, usually with the help of computers. Their analyses or assessments will be based on already existing knowledge of how things behave. Such theoretical research tends to predict how or what will occur for some specified series of conditions. Experimental testing or observations of natural systems will then be needed to confirm such predictions.

radar: A system for calculating the position, distance or other important characteristic of a distant object. It works by sending out pulses of radio waves that bounce off the object and then measuring how long the echo takes to return. Radar can detect moving objects, like airplanes. It also can be used to map the shape of land, even land covered by ice.
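The echo-timing idea behind radar comes down to one line of arithmetic: the pulse travels out to the object and back, so the distance is half of the signal's speed times the round-trip time. This Python sketch assumes the radio waves travel at the speed of light in a vacuum (a close approximation in air); the function name `radar_range_m` is made up for illustration and is not taken from any real radar system.

```python
# Speed of light in a vacuum, in meters per second. Radio waves travel at
# very nearly this speed through air.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def radar_range_m(round_trip_time_s: float) -> float:
    """Estimate the distance (in meters) to an object from a radar echo.

    The pulse goes out to the object and comes back, so the one-way
    distance is half of (speed x elapsed time).
    """
    if round_trip_time_s < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2


# Example: an echo that returns after 1 millisecond.
print(f"{radar_range_m(0.001) / 1000:.1f} km")  # prints "149.9 km"
```

Real radar systems also have to account for noise, signal processing delays and the exact speed of radio waves in the atmosphere, none of which this sketch models.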

robot: A machine that can sense its environment, process information and respond with specific actions. Some robots can act without any human input, while others are guided by a human.
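The sense, process and respond cycle in this definition can be pictured as a simple loop. This is a minimal sketch under made-up assumptions: the single distance sensor, the 0.5-meter safety threshold and the names `Reading`, `decide` and `run` are all hypothetical, and real robot software is far more involved.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    """One hypothetical sensor reading: distance to the nearest obstacle."""
    obstacle_distance_m: float


def decide(reading: Reading, safe_distance_m: float = 0.5) -> str:
    """Process a reading into an action (the threshold is an assumption)."""
    if reading.obstacle_distance_m < safe_distance_m:
        return "turn"  # too close: steer away
    return "forward"   # path is clear: keep going


def run(readings: list[Reading]) -> list[str]:
    """Sense, process and respond for each reading in turn."""
    actions = []
    for reading in readings:        # sense: take the next reading
        action = decide(reading)    # process: choose what to do
        actions.append(action)      # respond: here we only record the action
    return actions


print(run([Reading(2.0), Reading(0.3), Reading(1.0)]))
# prints ['forward', 'turn', 'forward']
```

A robot guided by a human would replace `decide` with commands from an operator; an autonomous one makes that choice itself.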

self-driving car: Also known as a driverless car or autonomous vehicle. These cars pilot themselves based on instructions that have been programmed into their computer guidance system.

sonar: A system for the detection of objects and for measuring the depth of water. It works by emitting sound pulses and measuring how long it takes the echoes to return.
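Sonar uses the same round-trip logic as radar, only with sound instead of radio waves: depth is half of the speed of sound times the echo's return time. This sketch assumes a speed of sound in seawater of about 1,500 meters per second, a commonly used approximation; the true value varies with temperature, saltiness and depth. The function name `sonar_depth_m` is hypothetical.

```python
# Approximate speed of sound in seawater, in meters per second.
# The real value changes with temperature, salinity and pressure.
SPEED_OF_SOUND_SEAWATER_M_PER_S = 1500.0


def sonar_depth_m(echo_time_s: float,
                  speed_m_per_s: float = SPEED_OF_SOUND_SEAWATER_M_PER_S) -> float:
    """Estimate water depth (in meters) from a sonar pulse's round-trip time.

    The sound travels down to the bottom and back up, so the depth is
    half of (speed x elapsed time).
    """
    if echo_time_s < 0:
        raise ValueError("echo time cannot be negative")
    return speed_m_per_s * echo_time_s / 2


# Example: an echo that returns after 2 seconds.
print(sonar_depth_m(2.0))  # prints 1500.0
```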

system: A network of parts that work together to achieve some function. For instance, the blood, vessels and heart are primary components of the human body's circulatory system. Similarly, trains, platforms, tracks, roadway signals and overpasses are among the potential components of a nation's railway system. The term system can even be applied to the processes or ideas that are part of some method or ordered set of procedures for getting a task done.

thermal: Of or relating to heat. (in meteorology) A relatively small-scale, rising air current produced when Earth’s surface is heated. Thermals are a common source of low-level turbulence for aircraft.

wavelength: The distance between one peak and the next in a series of waves, or the distance between one trough and the next. It’s also one of the “yardsticks” used to measure radiation. Visible light — which, like all electromagnetic radiation, travels in waves — includes wavelengths between about 380 nanometers (violet) and about 740 nanometers (red). Radiation with wavelengths shorter than visible light includes gamma rays, X-rays and ultraviolet light. Longer-wavelength radiation includes infrared light, microwaves and radio waves.
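For light and other electromagnetic radiation, wavelength and frequency are linked: wavelength equals the speed of light divided by frequency. This sketch applies that relation and then sorts the result using the roughly 380-to-740-nanometer visible range given above. The helper names `wavelength_nm` and `band` are hypothetical, and the cutoffs are the approximate values from this definition rather than sharp physical boundaries.

```python
# Speed of light in a vacuum, in meters per second.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0

# Approximate edges of the visible range, in nanometers (violet to red).
VISIBLE_MIN_NM = 380.0
VISIBLE_MAX_NM = 740.0


def wavelength_nm(frequency_hz: float) -> float:
    """Convert a frequency (in hertz) to a wavelength in nanometers."""
    if frequency_hz <= 0:
        raise ValueError("frequency must be positive")
    return SPEED_OF_LIGHT_M_PER_S / frequency_hz * 1e9  # meters -> nanometers


def band(wl_nm: float) -> str:
    """Place a wavelength relative to the visible range."""
    if wl_nm < VISIBLE_MIN_NM:
        return "shorter than visible"  # ultraviolet, X-rays, gamma rays
    if wl_nm > VISIBLE_MAX_NM:
        return "longer than visible"   # infrared, microwaves, radio waves
    return "visible"


# Example: green light at about 540 terahertz.
wl = wavelength_nm(5.4e14)
print(f"{wl:.0f} nm -> {band(wl)}")  # prints "555 nm -> visible"
```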

weather: Conditions in the atmosphere at a localized place and a particular time. It is usually described in terms of particular features, such as air pressure, humidity, moisture, any precipitation (rain, snow or ice), temperature and wind speed. Weather constitutes the actual conditions that occur at any time and place. It’s different from climate, which is a description of the conditions that tend to occur in some general region during a particular month or season.