Neuroscientists use brain scans to decode people’s thoughts

The method captured the key ideas of stories people heard — but only if they let it

With brain scans and a powerful computer model of language, scientists detected the main ideas from stories that people heard, thought or watched.

Like Dumbledore’s wand, a scan can pull long strings of stories straight out of a person’s brain. But it only works if that person cooperates.

This “mind-reading” feat has a long way to go before it can be used outside the lab. But the result could lead to devices that help people who can’t talk or communicate easily. The research was described May 1 in Nature Neuroscience.

“I thought it was fascinating,” says neural engineer Gopala Anumanchipalli. “It’s like, ‘Wow, now we are here already.’” Anumanchipalli works at the University of California, Berkeley. He wasn’t involved in the study, but he says, “I was delighted to see this.”

Scientists have tried implanting devices in people’s brains to detect thoughts. Such devices were able to “read” some words from people’s thoughts. This new system, though, requires no surgery. And it works better than other attempts to listen to the brain from outside of the head. It can produce continuous streams of words. Other methods have a more constrained vocabulary.

The researchers tested the new method on three people. Each person lay inside a bulky MRI machine for at least 16 hours. They listened to podcasts and other stories. At the same time, functional MRI scans detected changes in blood flow in the brain. These changes indicate brain activity, though they are slow and imperfect measures.

Alexander Huth and Jerry Tang are computational neuroscientists. They work at the University of Texas at Austin. Huth, Tang and their colleagues collected the data from the MRI scans. But they also needed another powerful tool. Their approach relied on a computer language model. The model was built with GPT, the same technology that enabled some of today’s AI chatbots.

Combining a person’s brain scans and the language model, the researchers matched patterns of brain activity to certain words and ideas. Then the team worked backward. They used brain activity patterns to predict new words and ideas. The process was repeated over and over. A decoder ranked how likely each candidate word was to follow the previous word. Then it used the brain activity patterns to help pick the most likely candidate. Ultimately it landed on the main idea.
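For readers who code, here is a toy sketch of that guess-and-check loop. It is not the researchers’ software. The tiny word table, the two-number “brain responses” and the names `NEXT_WORD`, `PREDICTED_RESPONSE`, `match` and `decode` are all made up for illustration. The sketch assumes a language model that proposes likely next words and a second model that predicts the brain response each word should evoke. It keeps the few word sequences whose predicted responses best fit what was observed.

```python
import math
from typing import Dict, List, Tuple

# Made-up language model: probability of each next word given the previous word.
NEXT_WORD: Dict[str, Dict[str, float]] = {
    "<start>": {"she": 0.6, "the": 0.4},
    "she": {"drives": 0.5, "sings": 0.5},
    "the": {"car": 0.7, "song": 0.3},
}

# Made-up stand-in for a model that predicts the brain response to each word.
PREDICTED_RESPONSE: Dict[str, List[float]] = {
    "she": [1.0, 0.0], "the": [0.0, 1.0],
    "drives": [0.9, 0.1], "sings": [0.1, 0.9],
    "car": [0.8, 0.2], "song": [0.2, 0.8],
}


def match(predicted: List[float], observed: List[float]) -> float:
    """Score closeness as negative squared distance (0 is a perfect match)."""
    return -sum((p - o) ** 2 for p, o in zip(predicted, observed))


def decode(observed_responses: List[List[float]], beam_width: int = 2) -> List[str]:
    """Keep the best few word sequences at each step (a simple beam search)."""
    beams: List[Tuple[float, List[str]]] = [(0.0, ["<start>"])]
    for observed in observed_responses:
        candidates: List[Tuple[float, List[str]]] = []
        for score, words in beams:
            # The language model proposes words that could follow the last one.
            for word, prob in NEXT_WORD.get(words[-1], {}).items():
                # Combine how likely the word is with how well it fits the scan.
                fit = match(PREDICTED_RESPONSE[word], observed)
                candidates.append((score + math.log(prob) + fit, words + [word]))
        if not candidates:
            break  # the toy table has no continuation for these sequences
        candidates.sort(key=lambda item: item[0], reverse=True)
        beams = candidates[:beam_width]
    return beams[0][1][1:]  # drop the "<start>" marker


if __name__ == "__main__":
    print(decode([[1.0, 0.0], [0.85, 0.15]]))
```

Holding on to several candidate sequences, rather than only the single best one, lets the search recover when an early guess turns out to fit the later brain activity poorly.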

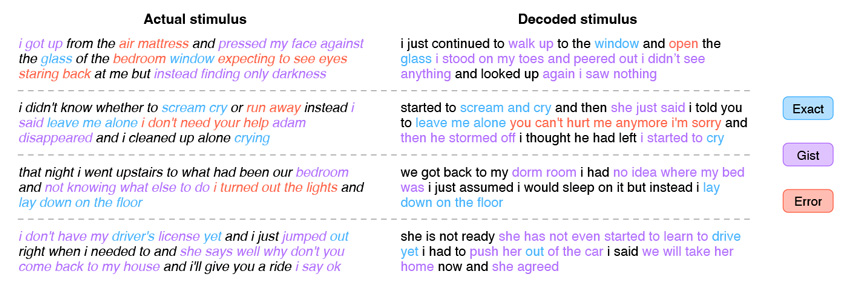

“It definitely doesn’t nail every word,” Huth says. The word-for-word error rate was pretty high, about 94 percent. “But that doesn’t account for how it paraphrases things,” he says. “It gets the ideas.” For instance, a person heard, “I don’t have my driver’s license yet.” The decoder then spat out, “She has not even started to learn to drive yet.”
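A word-for-word error rate is usually computed by counting the fewest word swaps, insertions and deletions needed to turn the decoder’s output into the original sentence, then dividing by the number of words in the original. The sketch below shows that standard calculation. It assumes simple lowercase splitting on spaces, and the function name `word_error_rate` is our own. The study’s exact scoring may have differed.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # a reference word is missing from the output
                curr[j - 1] + 1,     # the output has an extra word
                prev[j - 1] + cost,  # the words match, or one was swapped
            ))
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    heard = "I don't have my driver's license yet"
    decoded = "She has not even started to learn to drive yet"
    print(word_error_rate(heard, decoded))
```

For this sentence pair, only the final word lines up, so the score comes out very high even though the meaning is close. The score for one sentence can also differ from the average over whole stories. That gap between wording and meaning is the point Huth makes about paraphrasing.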

Such responses made it clear that the decoders struggle with pronouns. The researchers don’t yet know why. “It doesn’t know who is doing what to whom,” Huth said in an April 27 news briefing.

The researchers tested the decoders in two other scenarios. People were asked to silently tell a rehearsed story to themselves. They also watched silent movies. In both cases, the decoders could roughly re-create stories from people’s brains. The fact that these situations could be decoded was exciting, Huth says. “It meant that what we’re getting at with this decoder, it’s not low-level language stuff.” Instead, “we’re getting at the idea of the thing.”

“This study is very impressive,” says Sarah Wandelt. She is a computational neuroscientist at Caltech. She was not involved in the study. “It gives us a glimpse of what might be possible in the future.”

The research also raises concerns about eavesdropping on private thoughts. The researchers addressed this in the new study. “We know that this could come off as creepy,” Huth says. “It’s weird that we can put people in the scanner and read out what they’re kind of thinking.”

But the new method isn’t one-size-fits-all. Each decoder was quite personalized. It worked only for the person whose brain data had helped build it. What’s more, a person had to cooperate for the decoder to identify ideas. If a person wasn’t paying attention to an audio story, the decoder couldn’t pick that story up from brain signals. Participants could thwart the eavesdropping effort by ignoring the story and thinking about animals, doing math problems or focusing on a different story.

“I’m glad that these experiments are done with a view to understanding the privacy,” Anumanchipalli says. “I think we should be mindful, because after the fact, it’s hard to go back and put a pause on research.”