This crumb-sized camera uses artificial intelligence to get big results

Its unique lens and approach to image processing are behind its great photos

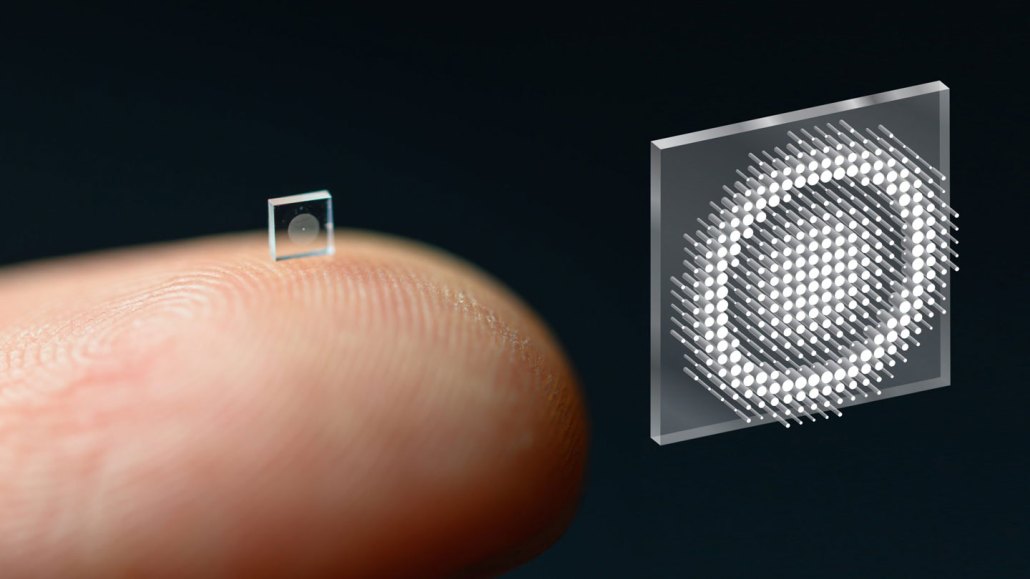

The new, miniaturized camera lens sits on the tip of a finger (left). It bends light with 1.6 million tiny metal rods instead of curved glass. The pattern formed by the rods is visible in a magnified image of the lens (right).

Princeton University

Say cheese! Researchers have developed a tiny camera that takes amazingly clear photos. Just don’t sneeze while it’s in your hand. At the size of a coarse grain of salt, you may never find it again.

Smaller cameras could mean lighter smartphones and new James Bond–style gadgets. But that’s not all. Cameras on this scale could swim through the body, hitch a ride on an insect, scope out your brain or monitor hostile environments. And those are just a few of the possibilities.

How do you pack that much picture-taking power into something the size of a crumb? It takes a “radically different approach” to making a camera lens, says Felix Heide. He’s a computer scientist at Princeton University in New Jersey. His lab developed the camera with colleagues from the University of Washington in Seattle. The team shared its work in Nature Communications in November.

Cameras have two main parts: a lens and a sensor. The lens bends incoming light onto the sensor, where the image is recorded. Over the last few decades, sensors have gotten smaller and smaller. But lenses are another story. “Lenses haven’t been miniaturized at all,” Heide says. Most are designed little differently today than they were in the 1800s.

Lenses are traditionally made by stacking curved pieces of glass or plastic. A curved surface bends light passing through. How the light bends depends on the curvature. A lens can be a single piece of glass. To bend light in more ways, you can stack several of them together.

Heide’s team took a totally different approach. They made a lens from a metasurface. These surfaces are super thin, human-made materials patterned with tiny structures. The structures are so small they’re measured in billionths of a meter (nanometers). Similar but slightly thicker materials are called metamaterials.

“Metamaterials interact with light in entirely new ways not found in nature,” explains Natalia Litchinitser. She’s an electrical engineer at Duke University in Durham, N.C. How they interact depends on the structures: their shape, density, pattern and what they’re made from. This is also true of metasurfaces.

With the right design, metasurfaces can become miniature lenses or mirrors. That means they can squeeze into tiny spaces and reveal things people haven’t seen before. Another plus? They can be made for pennies. That’s because you can make them using the process developed for producing computer chips.

Still, Litchinitser cautions, this technology is relatively new and has its limits. For example, metasurface lenses often produce fuzzy pictures or pictures with colored halos around the edges. Litchinitser credits the new camera’s creators for developing computer programs to overcome those problems. For these programs, the researchers turned to artificial intelligence, or AI.

‘Learning’ to get better pictures

When TikTok or Snapchat recognizes your face in a photo and applies a filter, that’s AI at work. The more you use these features, the better their machine learning gets at identifying you. That’s because these programs learn from their mistakes.

With a similar approach, Heide’s team tackled two key challenges for metasurface cameras: lens design and image quality. To get a high-quality image they needed a metasurface with more than 1.5 million metal structures. But how should the structures be arranged to get the best picture? It would take far too much time and computing power to explore every possibility.

Luckily, there’s a shortcut. The team wrote a computer program that simulated light traveling through a lens and the picture it created. Then the program tweaked the lens design and ran the simulation again. It compared the new picture to the previous one and judged which was better. As the program cycled through different possibilities, it learned a bit each time about how best to tweak the design and get the best picture.
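The tweak-and-compare loop described above can be sketched in a few lines of Python. This is only a toy illustration, not the team's program. The real simulation models light passing through more than a million structures. Here a made-up function, `image_error`, stands in for it, and a "design" is just a short list of numbers. All names in the sketch are hypothetical.

```python
import random


def image_error(design, target):
    """Hypothetical stand-in for the light simulation.

    Returns a score for how bad the simulated picture is (lower is better).
    In this toy, the picture is perfect when design equals target.
    """
    return sum((d - t) ** 2 for d, t in zip(design, target))


def optimize_design(target, steps=2000, step_size=0.1, seed=0):
    """Tweak one design value at a time and keep only the tweaks that help."""
    rng = random.Random(seed)
    design = [0.0] * len(target)
    best_error = image_error(design, target)
    history = [best_error]  # best score seen so far, recorded after each step
    for _ in range(steps):
        candidate = list(design)
        i = rng.randrange(len(candidate))
        candidate[i] += rng.uniform(-step_size, step_size)  # small random tweak
        error = image_error(candidate, target)
        if error < best_error:  # compare the new picture with the previous best
            design, best_error = candidate, error
        history.append(best_error)
    return design, history


if __name__ == "__main__":
    design, history = optimize_design([0.3, -0.7, 1.2, 0.5])
    print("error at start:", history[0])
    print("error at end:  ", history[-1])
```

Because a tweak is kept only when it lowers the error, the score can never get worse from one round to the next. A real design program would be far more sophisticated about choosing its tweaks, but the cycle of simulate, tweak and compare is the same.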

But even a perfect lens design won’t deliver crisp, clear pictures unless you tackle another challenge. No metasurface lens can perfectly focus all the light rays passing through. That introduces blurriness. To deal with this, the team wrote a second computer program. This one looked at images of a simulated scene. The images were blurry in different ways. By cycling through the images and comparing them to the original scene, the program learned to correct for each type of blurriness. The result: An image-processing program that made pictures sharp and in full focus.
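Here is a minimal sketch of how a program might learn to undo blur by comparing blurry pictures with the originals. It makes several simplifying assumptions that are not from the research: each "scene" is a short row of random brightness values, there is only one kind of blur, and the learned correction is a tiny three-number filter rather than the much more capable AI the team used. All function names are hypothetical.

```python
import random


def filter3(signal, weights):
    """Apply a 3-tap filter to a 1-D signal, repeating the edge samples."""
    n = len(signal)
    return [
        weights[0] * signal[max(i - 1, 0)]
        + weights[1] * signal[i]
        + weights[2] * signal[min(i + 1, n - 1)]
        for i in range(n)
    ]


def average_error(pairs, weights):
    """Mean squared difference between corrected signals and the originals."""
    total, count = 0.0, 0
    for blurry, sharp in pairs:
        restored = filter3(blurry, weights)
        total += sum((r - s) ** 2 for r, s in zip(restored, sharp))
        count += len(sharp)
    return total / count


def make_pairs(count=8, length=32, seed=0):
    """Build toy training data: random 'scenes' and their blurred copies."""
    rng = random.Random(seed)
    pairs = []
    for _ in range(count):
        sharp = [rng.uniform(-1.0, 1.0) for _ in range(length)]
        pairs.append((filter3(sharp, (0.25, 0.5, 0.25)), sharp))
    return pairs


def train_deblur(pairs, epochs=300, learning_rate=1.0):
    """Learn filter weights by gradient descent on the average error."""
    weights = [0.0, 1.0, 0.0]  # start with a filter that changes nothing
    total = sum(len(blurry) for blurry, _ in pairs)
    for _ in range(epochs):
        grad = [0.0, 0.0, 0.0]
        for blurry, sharp in pairs:
            n = len(blurry)
            restored = filter3(blurry, weights)
            for i in range(n):
                err = restored[i] - sharp[i]  # how far off is this sample?
                taps = (blurry[max(i - 1, 0)], blurry[i], blurry[min(i + 1, n - 1)])
                for k in range(3):
                    grad[k] += 2.0 * err * taps[k] / total
        # Nudge each weight in the direction that shrinks the error.
        weights = [w - learning_rate * g for w, g in zip(weights, grad)]
    return weights


if __name__ == "__main__":
    pairs = make_pairs()
    learned = train_deblur(pairs)
    print("error before:", average_error(pairs, [0.0, 1.0, 0.0]))
    print("error after: ", average_error(pairs, learned))
    print("learned filter:", learned)
```

In this toy, the learned filter boosts the middle value and subtracts a bit of each neighbor, which is a basic sharpening filter. It cannot remove the blur completely, but each round of comparing against the original scene brings the corrected version closer.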

A lens just 0.5 cubic millimeters (about 30 millionths of a cubic inch) in size now rivals the quality of a traditional camera lens 550,000 times that volume. Like pictures from its much bulkier counterpart, the new camera’s pictures are crisp and colorful and capture a wide field of view. You could even take a selfie with it. For now, however, the team is being extra careful and keeping it away from noses.

Ethan Tseng is a computer-science graduate student in Heide’s lab. He co-led the project with a student from the University of Washington. “We’re living in very exciting times,” Tseng says. “We’re seeing all kinds of cool tech that can completely change the way we originally thought of building things.”

This is one in a series presenting news on technology and innovation, made possible with generous support from the Lemelson Foundation.