New technology can get inside your head. Are you ready?

Some experts argue that such brain implants raise serious privacy issues

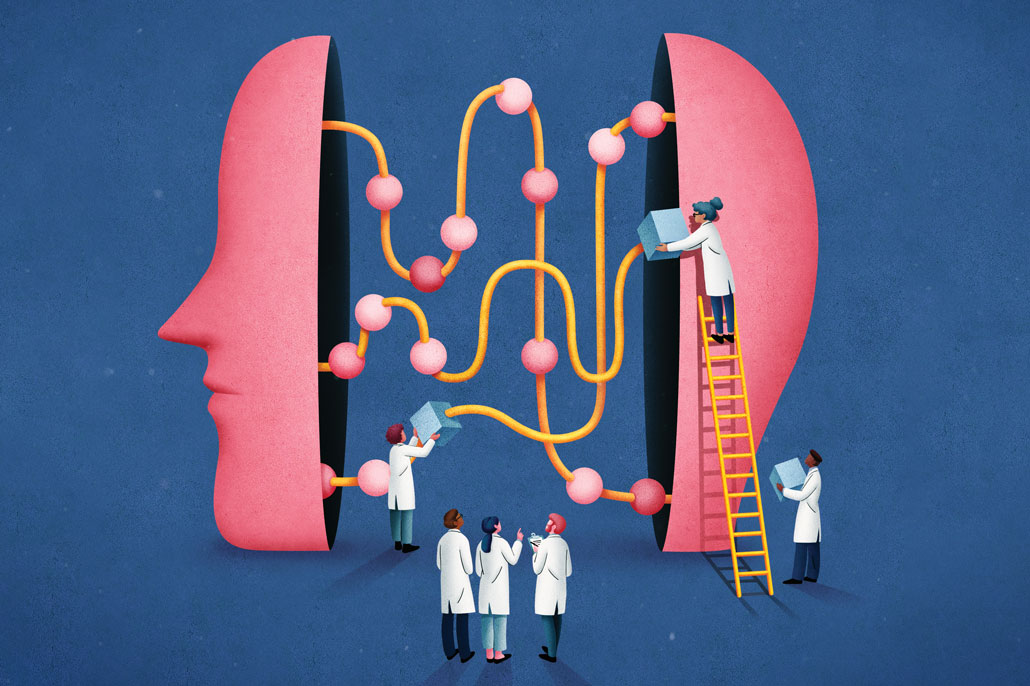

Ethicists, scientists and everyday people are grappling with the implications of new technologies that let outsiders inside the mind.

Julia Yellow

Gertrude the pig rooted around a straw-filled pen. She gave no notice to the cameras and onlookers. She also ignored the 1,024 electrodes eavesdropping on her brain. Each time Gertrude’s snout found a treat in a researcher’s hand, a musical jingle sounded. It signaled activity in nerve cells that control her snout.

Those beeps were part of a big August 28, 2020 reveal of the nerve-watching tech by Neuralink. It’s a company based in San Francisco, Calif. “In a lot of ways, it’s kind of like a Fitbit in your skull with tiny wires.” Or that’s how Elon Musk described his company’s new technology that day.

Neuroscientists study the brain. For decades, many of them have been recording nerve-cell activity in animals. But Musk and others are reaching to do far more. They want to enable us to perfectly save and relive our favorite memories. Or maybe we’ll replay video games in our heads. One day we may even beckon cars with our minds, Jedi–style.

Some scientists called Gertrude’s introduction just an attention-grabbing stunt. But Musk, the maker of Tesla cars, has surprised people before. “You can’t argue with a guy who built his own electric car and sent it to orbit around Mars,” says Christof Koch. He’s a neuroscientist at the Allen Institute for Brain Science in Seattle, Wash.

Advances in brain tech are coming quickly. They also span a variety of approaches. Some could lead to external headsets that may tell the difference between hunger and boredom. Electrodes implanted in the brain might help translate our intentions to speak into real words. Or bracelets may be on the horizon that use nerve impulses to type for you — no keyboard required.

Today, paralyzed people are already testing such technologies. Called brain-computer interfaces, they translate intentions into action. With brain signals alone, these people have been able to shop online, communicate — even use a prosthetic arm to sip from a cup. The ability to hear brain chatter, understand it and perhaps even modify it has the potential to change and improve people’s lives. And this neural eavesdropping may help in ways that go well beyond medicine.

Such technologies also raise questions. Chief among them: Who will get access to our brains and for what purpose?

Reading thoughts

Researchers and doctors have long sought to be able to pull information from someone’s brain — without relying on speaking, writing or typing. It could help people whose bodies can no longer move or speak. Implanted electrodes can record signals in movement areas of the brain. This has allowed some people to control robotic prostheses.

In January 2019, researchers at Johns Hopkins University implanted electrodes in the brain of Robert “Buz” Chmielewski. A surfing accident had left the man unable to use his arms or legs. Using signals from both sides of his brain, Chmielewski was able to control two prosthetic arms. With them, he could use a fork and a knife at the same time to feed himself. Researchers announced this in a press release late last year.

Other researchers decoded speech from the brain signals of a paralyzed man who is unable to speak. This man saw the question “Would you like some water?” on a computer screen. He then responded with the text, “No, I am not thirsty.” He got a computer to print the message using only signals from his brain. This work, described at a November 19, 2020 symposium hosted by Columbia University, was just one example of the advances in linking brains to computers.

“Never before have we been able to get that kind of information without interacting with [other parts of the body],” says Karen Rommelfanger. She’s a neuroethicist at Emory University in Atlanta, Ga. Speaking, sign language and writing, for instance, all require several decision-making steps, she says.

So far, efforts to pull information from the brain generally require bulky equipment, she notes. They’ve also needed heavy computing power. Most importantly, they required a willing participant. At least for now, any efforts to break into your mind could easily be halted by closing your eyes or even getting sleepy.

What’s more, Rommelfanger says, mind reading’s goal is too vague to be a concern. “I don’t believe that any neuroscientist knows what a mind is or what a thought is,” she says. As a result, she says, “I am not concerned about mind reading” — at least using the technologies that exist now.

But they may change quickly. “We are getting very, very close” to having the ability to pull private information from people’s brains, says Rafael Yuste. He’s a neurobiologist who works at Columbia University in New York City. Yuste notes that studies have begun to decode what someone is looking at and what words she might hear.

Scientists from Kernel, a neurotech company near Los Angeles, Calif., have invented a helmet. Just hitting the market, it works as a portable scanner. It highlights activity in certain areas of the brain.

For now, companies have only our behavior — our likes, our clicks, our purchase histories — to build eerily accurate profiles of us. And we let them. Predictive algorithms make good guesses. But they are only guesses. “With this neural data gleaned from neurotechnology, it may not be a guess anymore,” Yuste says. Companies will have the real thing, straight from your brain.

In the future, technologies may even be able to reveal subconscious thoughts, Yuste says. “That is the ultimate privacy fear — because what else is left?”

Next step: Altering behaviors?

Technology already exists to read brain activity — and change it. Such tools can detect a coming seizure in someone with epilepsy, for instance, and prevent it. Or it might stop a tremor before it takes hold. Researchers are even testing related systems for obsessive-compulsive disorder, addiction and depression. But the power to precisely change brain activity — and with it, someone’s behavior — raises disturbing questions.

The desire to change a person’s mind is not new, notes Marcello Ienca. He’s a bioethicist in Switzerland at ETH Zurich. Winning hearts and minds is at the core of advertising and politics. Persuading people is what debates are all about. Technology capable of changing your brain’s activity with just a subtle nudge, however, brings “manipulation risks to the next level,” Ienca says.

Science can’t do that yet. But in a hint of what may be possible, researchers have already created visions inside mouse brains. They used a technique called optogenetics. It uses light to stimulate small groups of nerve cells. In this way, the researchers made mice “see” lines that weren’t there. Those mice behaved exactly as if their eyes had actually seen the lines, says Yuste, whose research group performed some of these experiments. “Puppets,” he calls the affected mice.

All of these new advances come against a backdrop of technologies we now find very comfortable.

We allow our smartphones to monitor where we go, what time we fall asleep and even whether we’ve washed our hands for a full 20 seconds. At the same time, we share digital breadcrumbs online about the diets we try, what TV shows we binge and the tweets we love. For many of us, our lives already are an open book.

Those details are more powerful than brain data, says Anna Wexler. She’s an ethicist at the University of Pennsylvania in Philadelphia. “My email address, my notes app and my search-engine history are more reflective of who I am as a person — my identity — than our neural data may ever be,” she says.

How private should our brains and thoughts be?

Right now, Wexler says, it’s too early to worry about brain tech intruding on our privacy. But many people do not share that opinion. “Most of my colleagues,” she admits, “would tell me I’m crazy.”

Yuste and others would like to see strict laws to protect our privacy. They would like someone’s brain-cell data safeguarded, just as our organs are. No one can remove someone’s liver without approval for medical purposes. These researchers would like to see neural data given the same protections.

That viewpoint has gained acceptance in the South American nation of Chile. It is now considering whether to set up new protections to guard neural data so that companies cannot get at your data without your permission.

Other experts fall somewhere in the middle. Ienca, for example, thinks people ought to have the choice to sell or give away their brain data. They might do it in exchange for a product they like, or even just for cash. “The human brain is becoming a new asset,” he says. He’s fine with it becoming something that can bring big profits to the companies eager to mine these data.

If someone is well-informed about what they are selling or giving away, then he thinks they should have the right to sell their data, or exchange it for something they want.

But figuring out how to manage the data from someone’s brain won’t be easy, says Rommelfanger at Emory University. General rules and guidelines are not likely to be the way to go, she says. More than 20 frameworks, guidelines and principles have been developed to handle neuroscience, she says. Many tackle such things as “mental privacy” and “mental liberty” — the freedom to control your own mental life.

Such guidelines are thoughtful, Rommelfanger says. Still, technologies differ in what they can do and what their possible impacts will be. For now, she says, one-size-fits-all solutions don’t exist. Instead, each company or research group may need to work through ethical issues as their use of brain data progresses. She and her colleagues have recently proposed five questions that researchers can ask themselves to begin thinking about these ethical issues. Their questions ask people to consider how new technology might be used outside of a lab, for instance.

What would you like to tell the scientists working in this area? Send your thoughts to feedback@sciencenews.org.

Moving forward on developing the technology is essential, Rommelfanger believes. “More than my fear of a privacy violation, my fear is about diminished public trust that could undermine all of the good this technology could do.”

Not being clear on the ethics of mining brain data is unlikely to slow the pace of the coming neurotech rush. But thoughtful consideration of whether it’s appropriate to do so could help determine what’s to come. It could also help protect what makes us most human.

This project on ethics and science was supported by the Kavli Foundation.